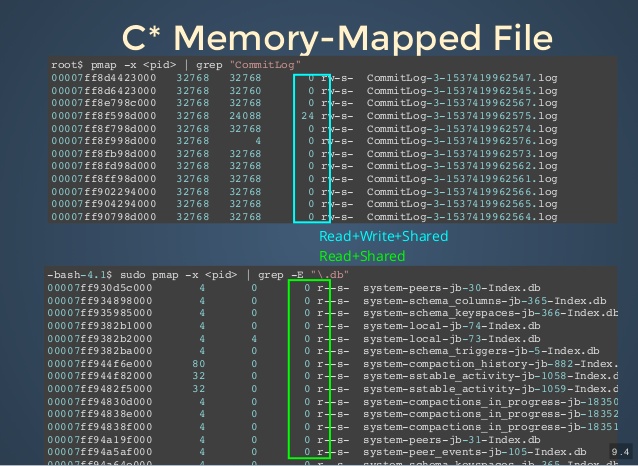

Let's look at FileChannel first. Of the following two pieces of code, which do you think is faster?

```java
FileChannel fileChannel = new RandomAccessFile(file, "rw").getChannel();
ByteBuffer byteBuffer = ByteBuffer.allocateDirect(_4kb);
for (int i = 0; i < _4kb; i++) {
    // …
}
// …
```

On my test machine, it took 3s, which is slower than a FileChannel write through a 4kb buffer, but far faster than FileChannel writing a single byte at a time.

This brings up the switch between "user mode" and "kernel mode", which I think is where many people's understanding gets blurry, so I'll sort out my own knowledge of it here. For security reasons, the operating system encapsulates some low-level capabilities and exposes them to user programs only through system calls. Each FileChannel.write() call is such a system call, so writing one byte per call pays for a user/kernel switch on every byte, while a 4kb buffer pays for it once per 4kb.

Many argue that mmap saves one copy compared with FileChannel, but I think we need to distinguish between scenarios. For example, if the requirement is to read an int from the beginning of a file, the two paths are actually the same, SSD -> PageCache -> application memory, and mmap does not save a copy. But if the requirement is to maintain a reusable 100MB buffer that involves file IO, mmap can serve directly as that 100MB buffer, instead of keeping a second 100MB buffer in process (user-space) memory. When mmap takes a page fault, the principle is the same as for FileChannel: both use the PageCache as a springboard to complete file reads and writes.

Note that the first parameter of `public abstract MappedByteBuffer map(MapMode mode, long position, long size)`, MapMode, actually has three values, yet searching the web turns up hardly any articles explaining it. The three enumerated values are READ_WRITE, READ_ONLY and PRIVATE. READ_WRITE is probably the one used most of the time, and READ_ONLY is simply READ_WRITE with writes forbidden, which is easy to understand, but PRIVATE seems to be shrouded in mystery.
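To make the three modes concrete, here is a small sketch, assuming a throwaway temp file holding the single byte `a` (the class `MapModeDemo`, its `Outcome` holder and the `run` helper are hypothetical names for this illustration). It maps that byte with each MapMode, tries to overwrite it with `b`, and compares what the mapping shows with what an ordinary file read returns. Per the `FileChannel.map` Javadoc, a READ_ONLY buffer rejects writes, READ_WRITE changes reach the file, and PRIVATE is a copy-on-write mapping whose changes stay inside the process.

```java
import java.io.IOException;
import java.io.RandomAccessFile;
import java.io.UncheckedIOException;
import java.nio.MappedByteBuffer;
import java.nio.ReadOnlyBufferException;
import java.nio.channels.FileChannel;
import java.nio.channels.FileChannel.MapMode;
import java.nio.file.Files;
import java.nio.file.Path;

class MapModeDemo {

    /** What one experiment observed at offset 0 (hypothetical helper type). */
    static final class Outcome {
        final boolean rejected; // put() threw ReadOnlyBufferException
        final byte inMapping;   // byte seen through the mapped buffer
        final byte inFile;      // byte read back from the file with an ordinary read

        Outcome(boolean rejected, byte inMapping, byte inFile) {
            this.rejected = rejected;
            this.inMapping = inMapping;
            this.inFile = inFile;
        }
    }

    // Creates a one-byte temp file containing 'a', maps it with `mode`,
    // tries to overwrite the byte with 'b', and reports what happened.
    static Outcome run(MapMode mode) {
        try {
            Path file = Files.createTempFile("mapmode", ".bin");
            file.toFile().deleteOnExit();
            Files.write(file, new byte[] {'a'});

            // READ_WRITE and PRIVATE both require a channel opened for reading and writing
            String rafMode = (mode == MapMode.READ_ONLY) ? "r" : "rw";
            try (RandomAccessFile raf = new RandomAccessFile(file.toFile(), rafMode);
                 FileChannel channel = raf.getChannel()) {
                MappedByteBuffer buffer = channel.map(mode, 0, 1);
                boolean rejected = false;
                try {
                    buffer.put(0, (byte) 'b');
                } catch (ReadOnlyBufferException e) {
                    rejected = true; // READ_ONLY: the buffer refuses writes
                }
                if (mode == MapMode.READ_WRITE) {
                    buffer.force(); // push the shared page's change to storage
                }
                byte inFile = Files.readAllBytes(file)[0];
                return new Outcome(rejected, buffer.get(0), inFile);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        for (MapMode mode : new MapMode[] {MapMode.READ_ONLY, MapMode.READ_WRITE, MapMode.PRIVATE}) {
            Outcome o = run(mode);
            System.out.printf("%s: rejected=%b mapping=%c file=%c%n",
                    mode, o.rejected, (char) o.inMapping, (char) o.inFile);
        }
    }
}
```

On a typical OS the expected picture is: READ_ONLY rejects the write and both views still show `a`; READ_WRITE shows `b` in both; PRIVATE shows `b` through the mapping but `a` in the file, because the write landed on a private copy of the page rather than on the PageCache page shared with the file.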

When I first learned about mmap, many articles said that mmap is suited to handling large files, but in retrospect this is a ridiculous view, and I hope this article makes clear what mmap is really for. The coexistence of FileChannel and mmap suggests that each has its appropriate use cases, and indeed they do. This section details the similarities and differences between FileChannel and mmap for file IO. Think of them as two tools for implementing file IO; neither tool is inherently better or worse.

Both FileChannel and mmap reads and writes go through the PageCache, or more precisely the cache portion of memory reported by vmstat, rather than user-space memory.

The mmap mapping process can be understood as lazy loading: only a get() triggers a page fault. The read-ahead size is determined by the OS and can be treated as 4kb by default. In other words, if you want lazy loading to become eager loading, you need to traverse the mapping once with a step of 4kb.
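A minimal sketch of the "traverse once with step=4kb" idea, assuming a 4kb page size (the real read-ahead size is chosen by the OS; `MmapPreload`, `touchEveryPage` and `preloadTempFile` are hypothetical names). Reading one byte per 4kb makes each page fault happen up front instead of on the first get() that happens to land in that page.

```java
import java.io.IOException;
import java.io.RandomAccessFile;
import java.io.UncheckedIOException;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.file.Files;
import java.nio.file.Path;

class MmapPreload {

    // Assumed page / read-ahead granularity; the actual value is OS-dependent.
    static final int STEP = 4 * 1024;

    // Sink for the bytes read, so the JIT cannot treat the loop as dead code.
    static volatile long sink;

    // Reads one byte every STEP bytes so each page is faulted in now rather than
    // lazily on first access. Returns the number of pages touched.
    static int touchEveryPage(MappedByteBuffer buffer) {
        long checksum = 0;
        int pages = 0;
        for (int i = 0; i < buffer.limit(); i += STEP) {
            checksum += buffer.get(i);
            pages++;
        }
        sink = checksum;
        return pages;
    }

    // Creates a temp file of `size` bytes, maps it read-only and pre-faults every page.
    static int preloadTempFile(int size) {
        try {
            Path file = Files.createTempFile("mmap-preload", ".bin");
            file.toFile().deleteOnExit();
            Files.write(file, new byte[size]);
            try (RandomAccessFile raf = new RandomAccessFile(file.toFile(), "r");
                 FileChannel channel = raf.getChannel()) {
                MappedByteBuffer buffer =
                        channel.map(FileChannel.MapMode.READ_ONLY, 0, channel.size());
                return touchEveryPage(buffer);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

For a 10000-byte file the loop touches offsets 0, 4096 and 8192, i.e. three pages. The JDK's `MappedByteBuffer.load()` makes a similar best-effort attempt to bring a whole mapping into physical memory, and `isLoaded()` only returns a hint, so the hand-written loop mainly shows what happens underneath.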

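A big motivation for writing this article came from the many misconceptions about mmap on the web.

Reading and writing through the mapped buffer:

```java
// Write
MappedByteBuffer subBuffer = mappedByteBuffer.…
….put(data);

// Read
byte[] data = new byte[4];
int position = 8;
// Read 4 bytes of data starting at the current mmap pointer position
mappedByteBuffer.get(data);
```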
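A self-contained version of the same read/write pattern, assuming a 64-byte temp file and a hypothetical `MmapReadWrite.roundTrip` helper. It writes and reads 4 bytes at the mapping's current pointer and 4 bytes at an explicit offset. To address the explicit offset it uses `duplicate()`, which shares the mapped memory but keeps its own position; that is a choice made for this sketch, not necessarily the call the original used.

```java
import java.io.IOException;
import java.io.RandomAccessFile;
import java.io.UncheckedIOException;
import java.nio.ByteBuffer;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

class MmapReadWrite {

    // Maps a fresh 64-byte temp file, writes 4 bytes at the current mmap pointer and
    // 4 bytes at `offset`, then reads both back. Returns "<first>,<second>".
    static String roundTrip(int offset) {
        try {
            Path file = Files.createTempFile("mmap-rw", ".bin");
            file.toFile().deleteOnExit();
            try (RandomAccessFile raf = new RandomAccessFile(file.toFile(), "rw");
                 FileChannel channel = raf.getChannel()) {
                // READ_WRITE mapping; the empty file is extended to 64 bytes
                MappedByteBuffer mapped = channel.map(FileChannel.MapMode.READ_WRITE, 0, 64);

                // Write 4 bytes at the current pointer (0); the pointer advances to 4
                mapped.put(new byte[] {'h', 'e', 'a', 'd'});

                // Write 4 bytes at an explicit offset via a view with its own position
                ByteBuffer writeView = mapped.duplicate();
                writeView.position(offset);
                writeView.put(new byte[] {'b', 'o', 'd', 'y'});

                // Read 4 bytes from the start of the mapping
                byte[] first = new byte[4];
                mapped.position(0);
                mapped.get(first);

                // Read 4 bytes from the explicit offset
                byte[] second = new byte[4];
                ByteBuffer readView = mapped.duplicate();
                readView.position(offset);
                readView.get(second);

                return new String(first, StandardCharsets.US_ASCII) + ","
                        + new String(second, StandardCharsets.US_ASCII);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println(roundTrip(8)); // prints "head,body"
    }
}
```

If `slice()` is used instead, keep in mind that a slice starts at the parent buffer's current position, so setting a slice's position to 8 addresses file offset 8 only when the parent's position is 0.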
